Transformer Implementation and PyTorch Source Walkthrough (Part 4): The Encoder Layer

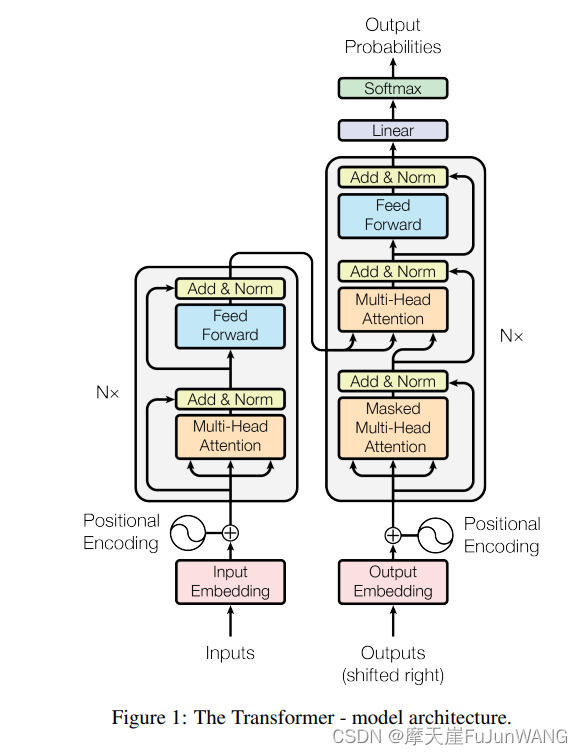

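Transformer architecture diagram

Here is the figure from the original paper. Everything from the inputs through Positional Encoding was covered in the first three parts, so this part continues from there.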

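How the Transformer encoder is called in PyTorch

PyTorch wraps the left half of Figure 1 in a single class, TransformerEncoder(encoder_layer, num_layers). It takes two required arguments: a TransformerEncoderLayer instance (encoder_layer) and the number of stacked layers (num_layers).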

import torch
from torch import nn
from torch.nn import functional as F

# PositionalEncoding (from the earlier parts) and length_to_mask are assumed to be defined elsewhere.

class Transformer(nn.Module):
    def __init__(self, vocab_size, embedding_dim, hidden_dim, num_class,
                 dim_feedforward=512, num_head=2, num_layers=2, dropout=0.1, max_len=512, activation: str = "relu"):
        super(Transformer, self).__init__()
        # Word embedding layer
        self.embedding_dim = embedding_dim
        self.embeddings = nn.Embedding(vocab_size, embedding_dim)
        # max_len does not need to be very large; it only has to exceed the longest sentence length.
        self.position_embedding = PositionalEncoding(embedding_dim, dropout, max_len)
        # Encoder: use the Transformer encoder.
        # hidden_dim is passed as d_model, so it must equal embedding_dim.
        encoder_layer = nn.TransformerEncoderLayer(hidden_dim, num_head, dim_feedforward, dropout, activation)
        self.transformer = nn.TransformerEncoder(encoder_layer, num_layers)
        # Output layer
        self.output = nn.Linear(hidden_dim, num_class)

    def forward(self, inputs, lengths):
        # After the transpose, each column of inputs is one sample, so there are batch_size columns.
        inputs = torch.transpose(inputs, 0, 1)
        hidden_states = self.embeddings(inputs)
        hidden_states = self.position_embedding(hidden_states)
        # Invert the mask: src_key_padding_mask expects True at padding positions.
        attention_mask = length_to_mask(lengths) == False
        hidden_states = self.transformer(hidden_states, src_key_padding_mask=attention_mask).transpose(0, 1)
        logits = self.output(hidden_states)
        log_probs = F.log_softmax(logits, dim=-1)
        return log_probs
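The `length_to_mask` helper used in `forward` is not shown in this post. The following is only a minimal sketch of what it might look like, assuming `lengths` is a 1-D tensor of true sequence lengths and that the helper returns `True` at real-token positions. Under that assumption, the mask has to be inverted before it is passed as `src_key_padding_mask`, because that argument expects `True` at the positions to ignore.

```python
import torch

def length_to_mask(lengths: torch.Tensor) -> torch.Tensor:
    """Hypothetical helper: True where a position holds a real token."""
    max_len = int(lengths.max())
    # positions: (1, max_len); lengths: (batch, 1); broadcast to (batch, max_len)
    positions = torch.arange(max_len, device=lengths.device).unsqueeze(0)
    return positions < lengths.unsqueeze(1)

lengths = torch.tensor([3, 1, 2])
token_mask = length_to_mask(lengths)   # True marks real tokens
padding_mask = ~token_mask             # True marks padding, as src_key_padding_mask expects
```

Note that the mask keeps the shape (batch, seq_len) even though the encoder input itself is (seq_len, batch, d_model) when `batch_first` is left at its default.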

Next, we go through the two classes involved one at a time.

The TransformerEncoder class

This class is located under \site-packages\torch\nn\modules\transformer.

class TransformerEncoder(Module):
    r"""TransformerEncoder is a stack of N encoder layers

    Args:
        encoder_layer: an instance of the TransformerEncoderLayer() class (required).
        num_layers: the number of sub-encoder-layers in the encoder (required).
        norm: the layer normalization component (optional).

    Examples::
        >>> encoder_layer = nn.TransformerEncoderLayer(d_model=512, nhead=8)
        >>> transformer_encoder = nn.TransformerEncoder(encoder_layer, num_layers=6)
        >>> src = torch.rand(10, 32, 512)
        >>> out = transformer_encoder(src)
    """
    __constants__ = ['norm']

    def __init__(self, encoder_layer, num_layers, norm=None):
        super(TransformerEncoder, self).__init__()
        self.layers = _get_clones(encoder_layer, num_layers)
        self.num_layers = num_layers
        self.norm = norm

    def forward(self, src: Tensor, mask: Optional[Tensor] = None, src_key_padding_mask: Optional[Tensor] = None) -> Tensor:
        r"""Pass the input through the encoder layers in turn.

        Args:
            src: the sequence to the encoder (required).
            mask: the mask for the src sequence (optional).
            src_key_padding_mask: the mask for the src keys per batch (optional).

        Shape:
            see the docs in Transformer class.
        """
        output = src

        for mod in self.layers:
            output = mod(output, src_mask=mask, src_key_padding_mask=src_key_padding_mask)

        if self.norm is not None:
            output = self.norm(output)

        return output

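This class is fairly simple: its main job is stacking. It first clones the given encoder_layer several times with the _get_clones() function, then calls the clones in a loop inside forward. Here is the implementation of _get_clones: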

def _get_clones(module, N):
    return ModuleList([copy.deepcopy(module) for i in range(N)])

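As shown, copy.deepcopy makes N independent copies of the layer, and forward then calls each copy in turn, feeding the output of one into the next. This chaining implies that a TransformerEncoderLayer must return output with the same shape as its input, which we confirm later in this part.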
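To make the effect of the deep copy concrete, here is a small sketch that mirrors the body of `_get_clones` without calling the private function itself. An `nn.Linear` stands in for the encoder layer purely for illustration. Each clone starts from the same values but owns its own parameters, so the stacked layers can diverge during training.

```python
import copy
import torch
from torch import nn

layer = nn.Linear(4, 4)  # stand-in for an encoder layer (illustrative assumption)
clones = nn.ModuleList([copy.deepcopy(layer) for _ in range(3)])

# Every clone starts from the same values as the original layer...
same_init = all(torch.equal(c.weight, layer.weight) for c in clones)

# ...but holds its own parameter tensor, so changing one leaves the others untouched.
with torch.no_grad():
    clones[0].weight.add_(1.0)
still_equal = torch.equal(clones[0].weight, clones[1].weight)
```

One practical consequence is that all layers of a freshly built `TransformerEncoder` begin with identical weights unless they are re-initialized afterwards.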

The TransformerEncoderLayer class

class TransformerEncoderLayer(Module):
    r"""TransformerEncoderLayer is made up of self-attn and feedforward network.
    This standard encoder layer is based on the paper "Attention Is All You Need".
    Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez,
    Lukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In Advances in
    Neural Information Processing Systems, pages 6000-6010. Users may modify or implement
    in a different way during application.

    Args:
        d_model: the number of expected features in the input (required).
        nhead: the number of heads in the multiheadattention models (required).
        dim_feedforward: the dimension of the feedforward network model (default=2048).
        dropout: the dropout value (default=0.1).
        activation: the activation function of the intermediate layer, can be a string
            ("relu" or "gelu") or a unary callable. Default: relu
        layer_norm_eps: the eps value in layer normalization components (default=1e-5).
        batch_first: If ``True``, then the input and output tensors are provided
            as (batch, seq, feature). Default: ``False``.
        norm_first: if ``True``, layer norm is done prior to attention and feedforward
            operations, respectivaly. Otherwise it's done after. Default: ``False`` (after).

    Examples::
        >>> encoder_layer = nn.TransformerEncoderLayer(d_model=512, nhead=8)
        >>> src = torch.rand(10, 32, 512)
        >>> out = encoder_layer(src)

    Alternatively, when ``batch_first`` is ``True``:
        >>> encoder_layer = nn.TransformerEncoderLayer(d_model=512, nhead=8, batch_first=True)
        >>> src = torch.rand(32, 10, 512)
        >>> out = encoder_layer(src)
    """
    __constants__ = ['batch_first', 'norm_first']

    def __init__(self, d_model, nhead, dim_feedforward=2048, dropout=0.1, activation=F.relu,
                 layer_norm_eps=1e-5, batch_first=False, norm_first=False,
                 device=None, dtype=None) -> None:
        factory_kwargs = {'device': device, 'dtype': dtype}
        super(TransformerEncoderLayer, self).__init__()
        self.self_attn = MultiheadAttention(d_model, nhead, dropout=dropout, batch_first=batch_first,
                                            **factory_kwargs)
        # Implementation of Feedforward model
        self.linear1 = Linear(d_model, dim_feedforward, **factory_kwargs)
        self.dropout = Dropout(dropout)
        self.linear2 = Linear(dim_feedforward, d_model, **factory_kwargs)

        self.norm_first = norm_first
        self.norm1 = LayerNorm(d_model, eps=layer_norm_eps, **factory_kwargs)
        self.norm2 = LayerNorm(d_model, eps=layer_norm_eps, **factory_kwargs)
        self.dropout1 = Dropout(dropout)
        self.dropout2 = Dropout(dropout)

        # Legacy string support for activation function.
        if isinstance(activation, str):
            self.activation = _get_activation_fn(activation)
        else:
            self.activation = activation

    def __setstate__(self, state):
        if 'activation' not in state:
            state['activation'] = F.relu
        super(TransformerEncoderLayer, self).__setstate__(state)

    def forward(self, src: Tensor, src_mask: Optional[Tensor] = None, src_key_padding_mask: Optional[Tensor] = None) -> Tensor:
        r"""Pass the input through the encoder layer.

        Args:
            src: the sequence to the encoder layer (required).
            src_mask: the mask for the src sequence (optional).
            src_key_padding_mask: the mask for the src keys per batch (optional).

        Shape:
            see the docs in Transformer class.
        """

        # see Fig. 1 of https://arxiv.org/pdf/2002.04745v1.pdf

        x = src
        if self.norm_first:
            x = x + self._sa_block(self.norm1(x), src_mask, src_key_padding_mask)
            x = x + self._ff_block(self.norm2(x))
        else:
            x = self.norm1(x + self._sa_block(x, src_mask, src_key_padding_mask))
            x = self.norm2(x + self._ff_block(x))

        return x

    # self-attention block
    def _sa_block(self, x: Tensor,
                  attn_mask: Optional[Tensor], key_padding_mask: Optional[Tensor]) -> Tensor:
        x = self.self_attn(x, x, x,
                           attn_mask=attn_mask,
                           key_padding_mask=key_padding_mask,
                           need_weights=False)[0]
        return self.dropout1(x)

    # feed forward block
    def _ff_block(self, x: Tensor) -> Tensor:
        x = self.linear2(self.dropout(self.activation(self.linear1(x))))
        return self.dropout2(x)

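Start with forward(). The output of each sub-layer is added to x as a residual, which only works when both have the same shape, and LayerNorm does not change the shape either. So the earlier assumption holds: every layer stacked in TransformerEncoder takes and returns tensors of the same shape, and the stack is simply called one layer after another in a for loop.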

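With the default norm_first=False, the following two lines of forward contain the entire computation of one Transformer encoder layer. First, the input is added to the output of multi-head self-attention and the sum is layer-normalized (norm1). Then that result is added to the output of the feed-forward block and layer-normalized again (norm2), which gives the layer's final output.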

x = self.norm1(x + self._sa_block(x, src_mask, src_key_padding_mask))

x = self.norm2(x + self._ff_block(x))
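As a sanity check on this reading, the two lines can be reproduced by hand from the layer's submodules and compared with the layer's own output. This is only a sketch under stated assumptions: the default `norm_first=False`, no masks, and `eval()` mode so that all dropout layers act as the identity. The tensor sizes are arbitrary small values chosen for illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)
layer = nn.TransformerEncoderLayer(d_model=16, nhead=4, dim_feedforward=32)
layer.eval()  # dropout becomes a no-op, so both paths are deterministic

src = torch.rand(5, 2, 16)  # (seq_len, batch, d_model)

with torch.no_grad():
    expected = layer(src)

    # Self-attention sub-layer: residual add, then LayerNorm (norm1).
    attn_out = layer.self_attn(src, src, src, need_weights=False)[0]
    x = layer.norm1(src + attn_out)

    # Feed-forward sub-layer: residual add, then LayerNorm (norm2).
    ff_out = layer.linear2(layer.activation(layer.linear1(x)))
    manual = layer.norm2(x + ff_out)

matches = torch.allclose(manual, expected, atol=1e-5)
```

If `matches` is true, the manual computation agrees with the library's forward pass, and the output keeps the shape of `src`.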

To keep the dimensions consistent, the feed-forward block is implemented as follows:

def _ff_block(self, x: Tensor) -> Tensor:
    x = self.linear2(self.dropout(self.activation(self.linear1(x))))
    return self.dropout2(x)

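linear1 and linear2 are initialized as shown below. Together with the _ff_block method, this shows that the feed-forward block first projects each token vector from d_model up to dim_feedforward, and linear2 then projects it from dim_feedforward back down to the original dimension (d_model).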

# Implementation of Feedforward model
self.linear1 = Linear(d_model, dim_feedforward, **factory_kwargs)
self.dropout = Dropout(dropout)
self.linear2 = Linear(dim_feedforward, d_model, **factory_kwargs)
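The expand-then-project pattern can be seen in isolation with two standalone linear layers. This is a minimal sketch with dropout omitted and with small sizes (`d_model = 8`, `dim_feedforward = 32`) picked only for illustration.

```python
import torch
from torch import nn
import torch.nn.functional as F

d_model, dim_feedforward = 8, 32
linear1 = nn.Linear(d_model, dim_feedforward)
linear2 = nn.Linear(dim_feedforward, d_model)

x = torch.rand(5, 2, d_model)   # (seq_len, batch, d_model)
hidden = F.relu(linear1(x))     # last dimension expanded to dim_feedforward
out = linear2(hidden)           # last dimension projected back to d_model
```

Only the last dimension changes along the way, and it ends up back at `d_model`, which is what allows the residual addition `x + self._ff_block(x)`.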

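Summary

At this point, the data flow through the Transformer encoder layer is clear. Apart from MultiheadAttention, the rest of the code and layer setup consists of basic PyTorch operations, so we do not trace their lower-level implementations. The implementation of MultiheadAttention is analyzed in the next part.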